The NVIDIA GeForce GTX 1660 Ti Review, Feat. EVGA XC GAMING: Turing Sheds RTX for the Mainstream Market

by Ryan Smith & Nate Oh on February 22, 2019 9:00 AM EST

Meet The EVGA GeForce GTX 1660 Ti XC Black GAMING

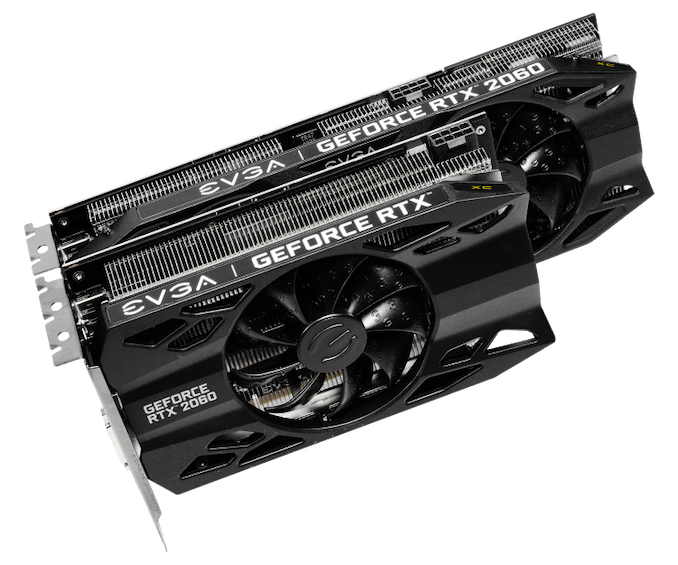

As a pure virtual launch, the release of the GeForce GTX 1660 Ti does not bring any Founders Edition model, and so everything is in the hands of NVIDIA’s add-in board partners. For today, we look at EVGA’s GeForce GTX 1660 Ti XC Black, a 2.75-slot single-fan card with reference clocks and a slightly increased TDP of 130W.

GeForce GTX 1660 Ti Card Comparison

| | GTX 1660 Ti Ref Spec | EVGA GTX 1660 Ti XC Black GAMING |
|---|---|---|
| Base Clock | 1500MHz | 1500MHz |
| Boost Clock | 1770MHz | 1770MHz |
| Memory Clock | 12Gbps GDDR6 | 12Gbps GDDR6 |
| VRAM | 6GB | 6GB |
| TDP | 120W | 130W |
| Length | N/A | 7.48" |
| Width | N/A | 2.75-Slot |
| Cooler Type | N/A | Open Air |
| Price | $279 | $279 |

With the GTX 1660 Ti intended to replace the GTX 1060 6GB, EVGA’s cooler and card design is new and improved compared to their Pascal cards. It was first introduced with the RTX 20-series, when EVGA rolled out the iCX2 cooling design and the new “XC” card branding to complement their existing SC and Gaming series. As we’ve seen before, the iCX platform comprises a medley of features, and some of the core technology is used even when the full iCX suite isn’t. For one, EVGA reworked their cooler design with hydraulic dynamic bearing (HDB) fans, which offer lower noise and a longer lifespan than sleeve and ball bearing types, and these are present on the EVGA GTX 1660 Ti XC Black.

In general, the card shares the design of the RTX 2060 XC, complete with those new raised EVGA ‘E’s on the fans, intended to improve slipstream. The single-fan RTX 2060 XC was paired with a thinner dual-fan XC Ultra variant, and in the same vein the GTX 1660 Ti XC Black is a one-fan design that essentially occupies three slots due to the thick heatsink and correspondingly taller fan hub. Its short length, though, makes it a natural fit for mini-ITX form factors.

As one of the cards lower down the RTX 20 and now GTX 16 series stack, the GTX 1660 Ti XC Black also lacks LEDs and zero-dB fan capability, in which the fans turn off completely at low idle temperatures. The former is an eternal matter of taste, as opposed to the practicality of the latter, but both tend to be perks of premium models and/or higher-end GPUs. Putting price aside for the moment, the reference-clocked GTX 1660 Ti and RTX 2060 XC Black editions are the more mainstream variants anyhow.

Otherwise, the GTX 1660 Ti XC Black unsurprisingly lacks a USB-C/VirtualLink output, offering up the mainstream-friendly 1x DisplayPort/1x HDMI/1x DVI setup. Although the TU116 GPU still supports VirtualLink, the decision to implement it is up to partners; the feature is less applicable for cards further down the stack, where cards are more sensitive to cost and are less likely to be used for VR. Additionally, the 30W USB-C controller power budget could be a significant amount relative to the overall TDP.

And on the topic of power, the GTX 1660 Ti XC Black’s power limit is actually capped at the default 130W, though theoretically the card’s single 8-pin PCIe power connector could supply 150W on its own.
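The power figures in the last two paragraphs can be put side by side with a little arithmetic. The sketch below is illustrative only: the constant and function names are hypothetical, the 120W, 130W, 150W and 30W values come from the text above, and the 75W PCIe slot allowance is an assumption taken from the general PCIe x16 slot specification rather than from this article.

```python
# Figures quoted in the article (watts).
REF_TDP_W = 120        # GTX 1660 Ti reference TDP
EVGA_TDP_W = 130       # EVGA XC Black TDP, which is also its power-limit cap
EIGHT_PIN_W = 150      # what a single 8-pin PCIe connector could supply
VIRTUALLINK_W = 30     # USB-C/VirtualLink controller power budget

# Assumption, not stated in the article: nominal power available
# from a PCIe x16 slot.
PCIE_SLOT_W = 75


def share_percent(part_w, total_w):
    """Return part_w as a percentage of total_w."""
    return 100.0 * part_w / total_w


def headroom_w(supply_w, limit_w):
    """Return how many watts of supply sit above the configured limit."""
    return supply_w - limit_w


if __name__ == "__main__":
    print(f"VirtualLink vs reference TDP: {share_percent(VIRTUALLINK_W, REF_TDP_W):.1f}%")
    print(f"VirtualLink vs EVGA TDP:      {share_percent(VIRTUALLINK_W, EVGA_TDP_W):.1f}%")
    print(f"8-pin headroom over cap:      {headroom_w(EIGHT_PIN_W, EVGA_TDP_W)}W")
    print(f"8-pin + slot headroom:        {headroom_w(EIGHT_PIN_W + PCIE_SLOT_W, EVGA_TDP_W)}W")
```

Under these figures, a 30W VirtualLink budget would amount to roughly a quarter of the reference TDP, while the 8-pin connector on its own sits about 20W above the card's 130W cap.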

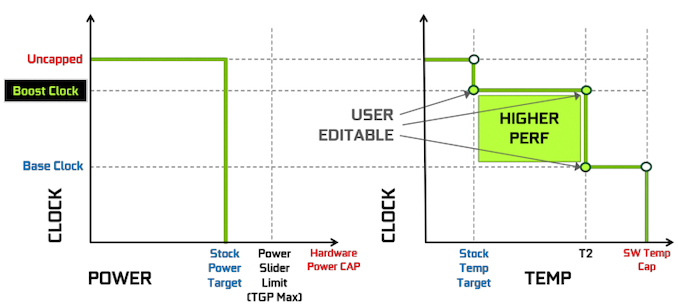

The rest of the GPU-tweaking knobs are there for your overclocking needs, and for EVGA this goes hand-in-hand with Precision, their overclocking utility. For NVIDIA’s Turing cards, EVGA released Precision X1, which allows modifying the voltage-frequency curve and scanning for auto-overclocking as part of Turing’s GPU Boost 4. Of course, NVIDIA’s restriction on actual overvolting is still in place, and for Turing there is a cap at 1.068V.

157 Comments


Psycho_McCrazy - Tuesday, February 26, 2019

Given that 21:9 monitors are also making great inroads into the gamer's purchase lists, can benchmark resolutions also include 2560.1080p, 3440.1440p and (my wishlist) 3840.1600p benchies??

eddman - Tuesday, February 26, 2019

2560x1080, 3440x1440 and 3840x1600

That's how you write it, and the "p" should not be used when stating the full resolution, since it's only supposed to be used for denoting video format resolution.

P.S. using 1080p, etc. for display resolutions isn't technically correct either, but it's too late for that.

Ginpo236 - Tuesday, February 26, 2019

a 3-slot ITX-sized graphics card. What ITX case can support this? 0.

bajs11 - Tuesday, February 26, 2019

Why can't they just make a GTX 2080Ti with the same performance as RTX 2080Ti but without useless RT and dlss and charge something like 899 usd (still 100 bucks more than gtx 1080ti)?

i bet it will sell like hotcakes or at least better than their overpriced RTX2080ti

peevee - Tuesday, February 26, 2019

Do I understand correctly that this thing does not have PCIe4?

CiccioB - Thursday, February 28, 2019

No, they do not have a PCIe4 bus.

Do you think they should have?

Questor - Wednesday, February 27, 2019

Why do I feel like this was a panic plan in an attempt to bandage the bleed from RTX failure? No support at launch and months later still abysmal support on a non-game changing and insanely expensive technology.

I am not falling for it.

CiccioB - Thursday, February 28, 2019

Yes, a "panic plan" that required about 3 years to create the chips.

3 years ago they already know that they would have panicked at the RTX cards launch and so they made the RT-less chip as well. They didn't know that the RT could not be supported in performance with the low number of CUDA core low level cards have.

They didn't know that the competitor would have played the only weapon left to it to battle with, that is price, as they could not think that the competitor was not ready with a beefed up architecture capable of the same functionalities.

So, yes, they panicked for sure. They were not prepared for anything of what is happening.

Korguz - Friday, March 1, 2019

" that required about 3 years to create the chips. 3 years ago they already know that they would have panicked at the RTX cards launch and so they made the RT-less chip as well. They didn't know that the RT could not be supported in performance with the low number of CUDA core low level cards have. "

and where did you read this ? you do understand, and realize... it IS possible to either disable, or remove parts of an IC without having to spend " about 3 years " to create the product, right ? intel does it with their IGP in their cpus, amd did it back in the Phenom days with chips like the Phenom X4 and X3....

CiccioB - Tuesday, March 5, 2019

So they created a TU116, a completely new die without RT and Tensor Core, to reduce the size of the die and lose about 15% of performance with respect to the 2060 all in 3 months because they panicked?

You probably have no idea of what are the efforts to create a 280mm^2 new die.

Well, by this and your previous posts you don't have idea of what you are talking about at all.