The NVIDIA GeForce GTX 650 Ti Review, Feat. Gigabyte, Zotac, & EVGA

by Ryan Smith on October 9, 2012 9:00 AM EST

"Once more into the fray.

Into the last good fight I'll ever know."

-The Grey

At a pace just shy of a card a month, NVIDIA has been launching the GeForce 600 series part by part for a little over half a year now. What started with the GeForce GTX 680 in March and most recently saw the launch of the GeForce GTX 660 will finally be coming to an end today with the 8th and what is likely the final retail GeForce 600 series card, the GeForce GTX 650 Ti.

Last month we saw the introduction of NVIDIA’s 3rd Kepler GPU, GK106, which takes its place between the high-end GK104 and NVIDIA’s low-end/mobile gem, GK107. At the time NVIDIA launched just a single GK106 card, the GTX 660, but of course NVIDIA never launches just one product based on a GPU – if nothing else the economics of semiconductor manufacturing dictate a need for binning, and by extension products to attach to those bins. So it should come as no great surprise that NVIDIA has one more desktop GK106 card, and that card is the GeForce GTX 650 Ti.

The GTX 650 Ti is the aptly named successor to 2011’s GeForce GTX 550 Ti, and will occupy the same $150 price point that the GTX 550 Ti launched into. It will sit between the GTX 660 and the recently launched GTX 650, and despite the much closer similarities to the GTX 660 NVIDIA is placing the card into their GTX 650 family and pitching it as a higher performance alternative to the GTX 650. With that in mind, what exactly does NVIDIA’s final desktop consumer launch of 2012 bring to the table? Let’s find out.

| | GTX 660 | GTX 650 Ti | GTX 650 | GTX 550 Ti |
|---|---|---|---|---|
| Stream Processors | 960 | 768 | 384 | 192 |
| Texture Units | 80 | 64 | 32 | 32 |
| ROPs | 24 | 16 | 16 | 24 |
| Core Clock | 980MHz | 925MHz | 1058MHz | 900MHz |
| Boost Clock | 1033MHz | N/A | N/A | N/A |
| Memory Clock | 6.008GHz GDDR5 | 5.4GHz GDDR5 | 5GHz GDDR5 | 4.1GHz GDDR5 |
| Memory Bus Width | 192-bit | 128-bit | 128-bit | 192-bit |
| VRAM | 2GB | 1GB/2GB | 1GB | 1GB |
| FP64 | 1/24 FP32 | 1/24 FP32 | 1/24 FP32 | 1/12 FP32 |
| TDP | 140W | 110W | 64W | 116W |
| GPU | GK106 | GK106 | GK107 | GF116 |
| Transistor Count | 2.54B | 2.54B | 1.3B | 1.17B |
| Manufacturing Process | TSMC 28nm | TSMC 28nm | TSMC 28nm | TSMC 40nm |
| Launch Price | $229 | $149 | $109 | $149 |

Coming from the GTX 660 and its fully enabled GK106 GPU, NVIDIA has cut several features and functional units in order to bring the GTX 650 Ti down to their desired TDP and price. As is customary for lower tier parts, the GTX 650 Ti ships with a binned GK106 GPU with some functional units disabled, and this is where it takes a big hit. For the GTX 650 Ti NVIDIA has opted to disable both SMXes and ROP/L2/memory clusters, with a greater emphasis on the latter.

On the shader side of the equation NVIDIA is disabling just a single SMX, giving GTX 650 Ti 768 CUDA cores and 64 texture units. On the ROP/L2/memory side of things however NVIDIA is disabling one of GK106’s three clusters (the minimum granularity for such a change), so coming from the GTX 660 the GTX 650 Ti will have much less memory bandwidth and ROP throughput than its older sibling.

Taking a look at clockspeeds, along with the reduction in functional units there has also been a reduction in clockspeeds across the board. The GTX 650 Ti will ship at 925MHz, 55MHz lower than the GTX 660’s base clock. Furthermore NVIDIA has decided to limit GPU boost functionality to the GTX 660 and higher families, so the GTX 650 Ti will actually run at 925MHz and no higher. Measured against the GTX 660’s 1033MHz boost clock, the effective difference is closer to 100MHz. On the other hand the lack of min-maxing here by NVIDIA will have some good ramifications for overclocking, as we’ll see. Meanwhile the memory clock will be at 5.4GHz, only 600MHz below NVIDIA’s standard-bearer Kepler memory clock of 6GHz, which is not nearly as big a loss of memory bandwidth as the one caused by the memory bus width reduction.
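To put numbers on the memory side, here is a minimal sketch of the usual peak bandwidth arithmetic (bus width in bytes multiplied by the effective data rate), using only the bus widths and memory clocks from the spec table. The function name `memory_bandwidth_gbps` is our own, and the results are theoretical peaks rather than measured figures.

```python
# Theoretical peak memory bandwidth = (bus width in bytes) x (effective data rate).
# Inputs are the bus widths and GDDR5 data rates from the spec table.
# memory_bandwidth_gbps is a hypothetical helper name used for illustration.

def memory_bandwidth_gbps(bus_width_bits: int, data_rate_ghz: float) -> float:
    """Return theoretical peak bandwidth in GB/s."""
    return bus_width_bits / 8 * data_rate_ghz

gtx660 = memory_bandwidth_gbps(192, 6.008)
gtx650ti = memory_bandwidth_gbps(128, 5.4)

# Split the loss into its two causes to see which one dominates.
bus_factor = 128 / 192        # effect of the narrower bus alone
clock_factor = 5.4 / 6.008    # effect of the lower memory clock alone

print(f"GTX 660:    {gtx660:.1f} GB/s")
print(f"GTX 650 Ti: {gtx650ti:.1f} GB/s")
print(f"Bus width alone:    {bus_factor:.0%} of the GTX 660")
print(f"Memory clock alone: {clock_factor:.0%} of the GTX 660")
```

Splitting the loss this way shows the bus width reduction costs roughly a third of the bandwidth on its own, while the clock reduction costs about a tenth.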

Overall this gives the GTX 650 Ti approximately 72% of the shading/texturing performance, 60% of the ROP throughput, and 60% of the memory bandwidth of the GTX 660. Meanwhile compared to the GTX 650 the GTX 650 Ti has 175% of the shading/texturing performance, 108% of the memory bandwidth, and 87% of the ROP throughput of its smaller sibling. For what little tradition there is, NVIDIA’s x50 parts are geared towards 1680x1050/1600x900 resolutions. And while NVIDIA is trying to stretch that definition due to the popularity of 1920x1080 monitors, the loss of the ROP/memory cluster all but closes the door on the GTX 650 Ti’s 1080p ambitions. The GTX 650 Ti will be for all intents and purposes NVIDIA’s fastest sub-1080p Kepler card.
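The percentages above can be reproduced from the spec table with simple units-times-clock arithmetic. The sketch below is one way to do it, not necessarily how the figures were originally derived. It assumes the GTX 660 is taken at its 1033MHz boost clock, which is the assumption under which the 72% and 60% figures come out; the `Card` type and `relative` helper are names of our own.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Card:
    cores: int       # stream processors
    rops: int
    core_mhz: int    # clock used for the comparison
    bus_bits: int
    mem_ghz: float   # effective GDDR5 data rate

# Assumption: the GTX 660 is compared at its 1033MHz boost clock.
# The other two cards have no boost clock, so their base clock is used.
GTX_660 = Card(cores=960, rops=24, core_mhz=1033, bus_bits=192, mem_ghz=6.008)
GTX_650_TI = Card(cores=768, rops=16, core_mhz=925, bus_bits=128, mem_ghz=5.4)
GTX_650 = Card(cores=384, rops=16, core_mhz=1058, bus_bits=128, mem_ghz=5.0)

def relative(a: Card, b: Card) -> dict:
    """Theoretical throughput of card a as a percentage of card b."""
    return {
        # Texture units scale with the cores on these parts (1 per 12 cores),
        # so the shading ratio doubles as the texturing ratio.
        "shading": 100 * (a.cores * a.core_mhz) / (b.cores * b.core_mhz),
        "rop": 100 * (a.rops * a.core_mhz) / (b.rops * b.core_mhz),
        "bandwidth": 100 * (a.bus_bits * a.mem_ghz) / (b.bus_bits * b.mem_ghz),
    }

vs_660 = relative(GTX_650_TI, GTX_660)
vs_650 = relative(GTX_650_TI, GTX_650)

for name, ratios in (("GTX 660", vs_660), ("GTX 650", vs_650)):
    summary = ", ".join(f"{key} {value:.0f}%" for key, value in ratios.items())
    print(f"GTX 650 Ti vs {name}: {summary}")
```

If the GTX 660 were taken at its 980MHz base clock instead, the shading figure would work out closer to 76%, so the choice of reference clock matters for this comparison.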

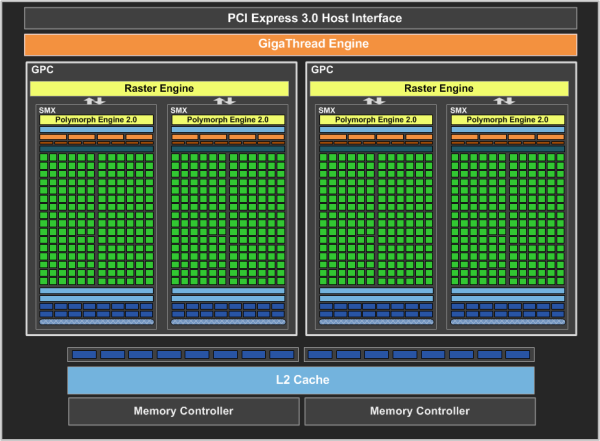

Moving on, it was interesting to find out that NVIDIA is not going to be disabling SMXes for the GTX 650 Ti in a straightforward manner. Because of GK106’s asymmetrical design and the pigeonhole principle – 5 SMXes spread over 3 GPCs – NVIDIA is going to be shipping GTX 650 Ti certified GPUs with both 2 GPCs and 3 GPCs, depending on which GPC houses the defective SMX that NVIDIA will be disabling. To the best of our knowledge this is the first time NVIDIA has done something like this, particularly since Fermi cards had far more SMs per GPC. Despite the fact that 3 GPC versions of the GTX 650 Ti should technically have a performance advantage due to the extra Raster Engine, NVIDIA tells us that the performance is virtually identical to the 2 GPC version. Ultimately since GTX 650 Ti is going to be ROP bottlenecked anyhow – and hence lacking the ROP throughput to take advantage of that 3rd Raster Engine – the difference should be just as insignificant as NVIDIA claims.
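The 2 GPC versus 3 GPC outcome can be enumerated directly. The sketch below assumes GK106's 5 SMXes are arranged as 2 + 2 + 1 across its 3 GPCs; the text above only says 5 SMXes over 3 GPCs, so that exact layout is our assumption, as are the names used in the code.

```python
# Assumed layout (not stated explicitly above): GK106's 5 SMXes sit in
# 3 GPCs as 2 + 2 + 1.
GPC_LAYOUT = (2, 2, 1)

def active_gpcs_after_disabling(layout, gpc_index):
    """Count GPCs that still have at least one SMX after disabling
    a single SMX in the GPC at gpc_index."""
    remaining = list(layout)
    remaining[gpc_index] -= 1
    return sum(1 for smx_count in remaining if smx_count > 0)

# Try disabling one SMX in each GPC in turn.
outcomes = {
    gpc: active_gpcs_after_disabling(GPC_LAYOUT, gpc)
    for gpc in range(len(GPC_LAYOUT))
}

for gpc, active in outcomes.items():
    print(f"Defective SMX in GPC {gpc}: {active} GPCs remain active")
```

Under this layout, a defect in either 2-SMX GPC leaves all 3 GPCs (and all 3 Raster Engines) active, while a defect in the lone 1-SMX GPC removes that GPC entirely.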

Meanwhile when it comes to power consumption the GTX 650 Ti is being given a TDP of 110W, some 30W lower than the GTX 660. Even compared to the GTX 550 Ti this is still a hair lower (116W vs. 110W), while the gap between the GTX 650 Ti and GTX 650 will be 46W. Idle power consumption on the other hand will be virtually unchanged, with the GTX 650 Ti maintaining the GTX 660’s 5W standard.

As NVIDIA’s final consumer desktop GeForce 600 card for the year, NVIDIA is setting the MSRP of the 1GB card at $150, between the $109 GTX 650 and the $229 GTX 660. This is another virtual launch, with partners going ahead with their own designs from the start. NVIDIA’s reference design will not be directly sold, but most of the retail boards will be very similar to NVIDIA’s reference card anyhow, implementing a single-fan open air cooler like NVIDIA’s. PCBs should also be similar; 2 of the 3 retail cards we’re looking at use the reference PCB, which on a side note is identical to the GTX 650 reference PCB as GTX 650 Ti and GTX 650 are pin compatible. Meanwhile similar to the GTX 660 Ti launch, partners will be going ahead with a mix of memory capacities, with many partners offering both 1GB and 2GB cards.

At launch the GTX 650 Ti will be facing competition from both last-generation GeForce cards and current-generation Radeon cards. The GeForce GTX 560 is currently going for almost exactly $150, making it direct competition for the GTX 650 Ti. The 560 cannot match the GTX 650 Ti’s power consumption, but thanks to its ROP performance and memory bandwidth it’s a potent competitor for rendering performance.

Meanwhile the Radeon competition will be the tag-team of the 7770 and the 7850. The 7770 is not nearly as powerful as the GTX 650 Ti, but with prices at-or-below $119 it significantly undercuts the GTX 650 Ti. Meanwhile the Pitcairn based 7850 1GB can more than give the GTX 650 Ti a run for its money, but is priced on average $20 higher at $169, and as the 1GB version is a bit of a niche product for AMD the card selection won’t be as great.

To sweeten the deal NVIDIA has a new game bundle promotion starting up for the GTX 650 Ti. Retailers will be bundling vouchers for Assassin’s Creed III with GTX 650 Ti cards in North America and in Europe. Unreleased games tend to be good deals value-wise, but in the case of Assassin’s Creed III this also means waiting nearly 2 months for the PC version of the game to ship.

Fall 2012 GPU Pricing Comparison

| AMD | Price | NVIDIA |
|---|---|---|
| Radeon HD 7950 | $329 | |
| | $299 | GeForce GTX 660 Ti |
| Radeon HD 7870 | $239/$229 | GeForce GTX 660 |
| Radeon HD 7850 2GB | $189 | |
| Radeon HD 7850 1GB | $169 | GeForce GTX 650 Ti 2GB |
| | $149 | GeForce GTX 650 Ti 1GB |
| Radeon HD 7770 | $109 | GeForce GTX 650 |
| Radeon HD 7750 | $99 | GeForce GT 640 |

91 Comments


Hades16x - Tuesday, October 9, 2012

A little bit saucy while reading this review on the page "Meet the Gigabyte Gefore GTX 650 TI OC 2GB Windforce" the second to last paragraph reads: "Rounding out the package is the usual collection of power adapters and a quick start guide. While it’s not included in the box or listed on the box, the Gigabyte GeForce GTX 660 Ti OC...."

Shouldn't that read "the Gigabyte GeForce GTX 650 Ti OC" ?

Thanks for the review Ryan!

Hrel - Tuesday, October 9, 2012

First, card makers: If the card doesn't have FULL size HDMI, I won't even consider it. I get mini on smartphones, makes no damn sense on a GPU that goes into a 20lb desktop. Fuck everyone who does that. Second, Every display I own uses HDMI, most of them ONLY use HDMI. I want to see cards with 3 or 4 HDMI ports on them so I can run 3/4 displays without having to chain together a bunch of fucking adapters. HDMI or GTFO. I really don't understand why any other video cable even exists anymore, DVI is dumb and old, VGA, psh. Display Port? Never even seen it on a monitor/TV. I don't spend stupid amounts of money on stupid resolution displays where NO media is even produced at that resolution; but last I checked HDMI supports 8K video.

Next: I bought my GTX460 for 130, or 135 bucks. This was a few months after it was released and with a rebate and weekend sale on newegg. Still, that card can MAX out every game I play at 1080p with no issues. I get that they're putting more RAM in the cards now, but that can't really justify more than a 10$ difference; of actual cost. I don't see the GTX660 EVER dropping down to 150 bucks or lower, WTF? Why is the GPU industry getting DRAMATICALLY more expensive and no one seems to be saying a thing? Remember the system RAM price fixing thing? Yeah, that sucked didn't it. I'd really hate to see that happen to GPU's.

It's good to finally see a tangible improvement in performance in GPU's. From GT8800 to GTX560 improvements were very incremental; seems like an actual gain has been achieved beyond just generational improvements. Hoping consoles have at least 2GB of GDDR5 and at least 4GB of DDR3 system RAM for next gen. Seems like RAM is becoming much more important, based on Skyrim. With that said, I can buy 8GB of system ram for like 30 or 40 bucks. Puts actual cost at a few dollars. No reason at all these cards/consoles can't have shit tons of RAM all over the damn place. RAM is cheap, doesn't cost anything anymore. You can charge 10 bucks/4GB and still turn a stupid profit. Do the right thing Microsoft/Nvidia and everyone else; put shit tons of RAM in AT COST. Make money on the GPU/Console/Games.

maximumGPU - Wednesday, October 10, 2012

we should all be pushing and asking for royalty-free display ports! and just so you'd know quite a few high end monitors don't have hdmi, the dell ultrasharp U2312hm comes to mind.

DP should be the standard.

Hrel - Thursday, October 11, 2012

DP doesn't support audio, as far as I know. Also offers no advantage at all for video. So why?

maximumGPU - Thursday, October 11, 2012

It does support audio! With all else being equal the fact that it's royalty free means it's preferable to hdmi.

TheJian - Wednesday, October 10, 2012

I'm not sure of physx in AC3, but yeah odd they put this in there. I would have figured a much cheaper game. When you factor in physx in games like Borderlands2 it changes the game quite a lot. You can interact with objects in a way you can't on AMD:

"One of the cool things about PhysX is that you can interact with these objects. In this screenshot we are firing a shot at the flag. The bullets go through the flag, causing it to blow a hole in the middle of it. After the actual flag tears apart, the entire string of flags fell down. This happens with flags and other cloth objects that are hanging around, the "Porta-John's" that are scattered across the world, blood and explosive objects. You can not destroy any of these objects without PhysX enabled on at least Medium. "

http://hardocp.com/article/2012/10/01/borderlands_...

I don't know why more sites don't talk about the physx stuff. I also like hardocp ALWAYS showing minimums as that is more important than anything else IMHO. I need to know a game is playable or not, not that it can hit 100fps here and there. Their graphs always show how LONG they stay low also. Much more useful info than a max fps shot in time (or even avg to me, I want min numbers). Anandtech only puts mins in where it makes an AMD look good it seems. Not sure other than that why they wouldn't include them in EVERY game with a graph like hardocp showing how long their there. If you read hardocp it's because they dip a lot, but maybe I'm just a cynic. At least they brought back SC2 :) Cuda is even starting to be used in games like just cause 2 (for water).

http://www.geforce.com/games-applications/pc-games...

Interesting :)

jtenorj - Wednesday, October 10, 2012

You can run medium physx on a radeon without much loss of performance.

Magnus101 - Wednesday, October 10, 2012

Why suddenly the race for 60 FPS? It used to be 30 FPS average and minimums not going under 18 in Crysis that was considered good.

Movies are at 24 FPS and stuttering isn't recognisable until you hit 16-17 FPS.

Pal TV in Europe was at 25 FPS.

It looks like everybody is buying into Carmacks 60 FPS mantra, which is insane.

For me minimums above 20 FPS is enough for a game to be perfectly playable.

This is the snobby debate with audiphiles all over again where they swear they can tell the difference between 96 and 44.1 khz, just substitue the samplerate with FPS.

But I guess the Nvidia and ATI are happy that you for no reason just raise the bar of acceptance!

ionis - Wednesday, October 10, 2012

60 FPS has been the target for the past 3-4 years. I'm happy with 25-30 but this min 30 ave 60 FPS target has been going on for quite a while now.

CeriseCogburn - Friday, October 12, 2012

You'll be happy until you play the same games on a cranked SB system with a high end capable videocard and an SSD (on a good low ping connection if multi). Until then you have no idea what you are missing. You're telling yourself there isn't more, but there is a lot, lot more.

Quality

Fluidity

Immersion

Perception in game

Precision

Timing

CONTROL of your game.

Yes it is snobby to anyone lower than whatever the snob build is - well, sort of, because the price to get there is not much at all really.

You may not need it, you may "be fine" with what you have, but there is exactly zero chance there isn't a huge, huge difference.