The Intel Skylake i7-6700K Overclocking Performance Mini-Test to 4.8 GHz

by Ian Cutress on August 28, 2015 2:30 PM EST

CPU Tests on Windows: Professional

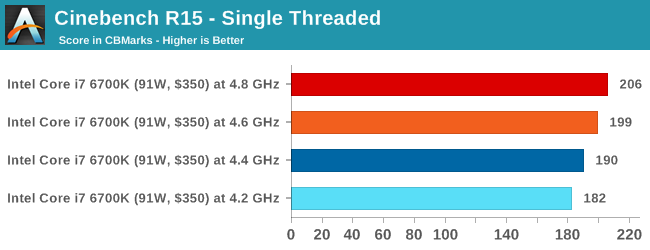

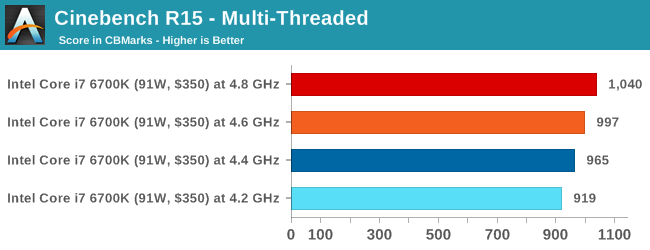

Cinebench R15

Cinebench is a benchmark based on Cinema 4D, and is well known among enthusiasts for stressing the CPU with a fixed workload. Results are given as a score, where higher is better.

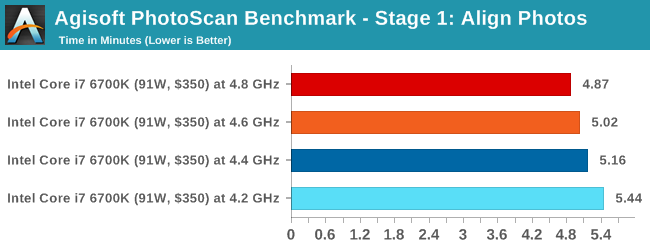

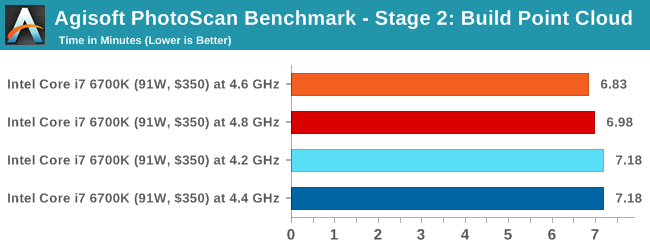

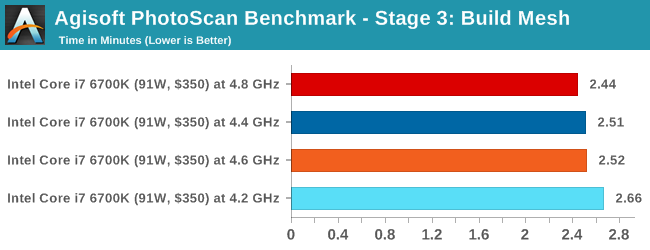

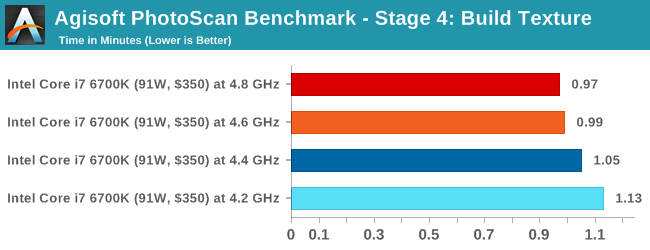

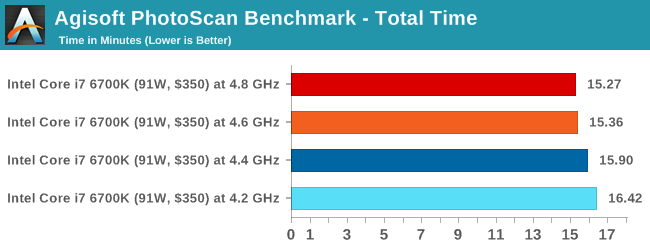

Agisoft Photoscan – 2D to 3D Image Manipulation

Agisoft Photoscan creates 3D models from 2D images, a process that is very computationally expensive. The algorithm is split into four distinct phases, and different phases of the model reconstruction benefit from fast memory, high IPC, more cores, or even OpenCL compute devices. Agisoft supplied us with a special version of the software that lets us script the process: we take 50 images of a stately home and convert them into a medium-quality model. This benchmark typically takes around 15-20 minutes on a high-end PC using the CPU alone, with GPUs reducing the time.

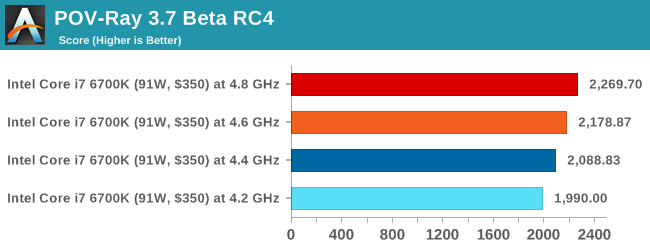

Rendering – PovRay 3.7

The Persistence of Vision RayTracer, or PovRay, is a freeware package for, as the name suggests, ray tracing. It is a pure renderer rather than modeling software, but the latest beta version contains a handy benchmark for stressing all processing threads on a platform. We have been using this test in motherboard reviews to check memory stability at various CPU speeds, to good effect: if it passes the test, the IMC in the CPU is stable at that CPU speed. As a CPU test, it runs for approximately 2-3 minutes on high-end platforms.

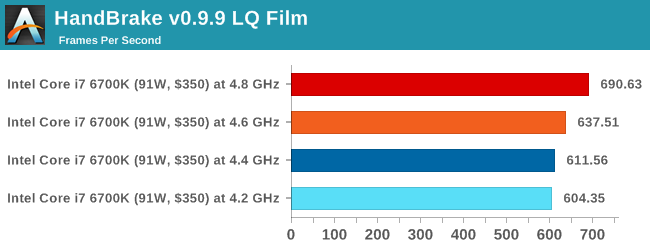

HandBrake v0.9.9 LQ

For HandBrake, we take a 2h20 640x266 DVD rip and convert it to H.264 with the x264 encoder, in an MP4 container. Results are given as the frames per second processed, and HandBrake uses as many threads as are available.
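As a rough illustration of what a frames-per-second result means in wall-clock terms, the sketch below converts an average encode rate into total encode time for a clip of the stated 2h20 length. It assumes the source runs at 23.976 fps (typical for film-sourced NTSC DVDs); the actual frame rate of the test file is not stated, and the helper names are hypothetical.

```python
# Hypothetical helpers relating a HandBrake-style fps result to wall-clock time.
# ASSUMPTION: the source clip runs at 23.976 fps (film-sourced NTSC DVD);
# the real frame rate of the benchmark file is not given in the article.

SOURCE_FPS = 24000 / 1001          # ~23.976 fps, assumed
CLIP_SECONDS = 2 * 3600 + 20 * 60  # 2h20 clip = 8400 s


def total_frames(clip_seconds: float = CLIP_SECONDS,
                 source_fps: float = SOURCE_FPS) -> float:
    """Number of frames in the clip: duration times source frame rate."""
    return clip_seconds * source_fps


def encode_minutes(encode_fps: float) -> float:
    """Wall-clock encode time in minutes at a given average encode rate."""
    if encode_fps <= 0:
        raise ValueError("encode_fps must be positive")
    return total_frames() / encode_fps / 60


if __name__ == "__main__":
    # Illustrative rates only, not measured results from this review.
    for fps in (100.0, 200.0):
        print(f"{fps:.0f} fps -> {encode_minutes(fps):.1f} min")
```

Because encode time is inversely proportional to fps, a 10% higher fps result shortens the encode by about 9%, not 10%.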

Conclusions on Professional Performance

In all of our professional-level tests, the gain from the overclock is largely as expected. Photoscan sometimes tells a different story, partly because of run-to-run randomness in its implementation and partly because its thread load varies from stage to stage. The HandBrake results at high quality (double 4K) are not published here, because that test failed at 4.6 GHz and above. A separate page at the end of this mini-review addresses this stability issue.

103 Comments


bill.rookard - Friday, August 28, 2015

I wonder if not having the FIVR on-die has to do with the difference between the Haswell voltage limits and the Skylake limits?

Communism - Friday, August 28, 2015

Highly doubtful, as Ivy Bridge has roughly the same voltage limits.

Morawka - Saturday, August 29, 2015

yeah, that's a crazy high voltage... that was even high for 65nm i7 920s

kuttan - Sunday, August 30, 2015

i7 920 is 45nm, not 65nm

Cellar Door - Friday, August 28, 2015

Ian, so it seems like the memory controller, even though it is capable of driving DDR4 to some insane frequencies, errors out with large data sets? It would be interesting to see this behavior with Skylake and DDR3L.

Also it would be interesting to see whether the i5-6600K, lacking Hyper-Threading, would run into the same issues.

Communism - Friday, August 28, 2015

So your sample definitively wasn't stable above 4.5 GHz after all, then... Haswell/Broadwell/Skylake dud confirmed. Waiting for Skylake-E, where the "reverse hyperthreading" will be best leveraged with the 6/8-core variant with proper quad-channel memory bandwidth.

V900 - Friday, August 28, 2015

Nope, it was stable above 4.5 GHz... And no dud confirmed in Broadwell/Skylake.

There is just one specific scenario (4K/60 encoding) where the combination of the software and the design of the processor makes overclocking unfeasible.

Not really a failure on Intel's part, since it's not realistic to expect them to design a mass-market CPU around the whims of the 0.5% of their customers who overclock.

Gigaplex - Saturday, August 29, 2015

If you can find a single software load that reliably works at stock settings but fails when overclocked, then the overclock is by definition not 100% stable. You might not care and be happy to risk using a system configured like that, but I sure as hell wouldn't.

Oxford Guy - Saturday, August 29, 2015

Exactly. Not stable is not stable.

HollyDOL - Sunday, August 30, 2015

I have to agree... While we are not talking about server-grade stability with ECC and the like, on the desktop you are either rock stable or not stable at all. Failing even one of the test scenarios is not good. I wouldn't be happy if hidden issues cropped up during compilations, or after a few hours of rendering a scene... or, let's be honest, in the middle of a gaming session with my online guild. That's why I run my 2500K half a GHz below the highest clock that stability testing showed as error-free. Maybe that's excessive, but I like to be on the safe side with my OC, especially since the machine is used for a wide variety of purposes.